Remember when creating an avatar meant spending hours customizing a video game character with limited hairstyles and outfits? Those days feel like ancient history. In 2026, avatar makers have evolved into sophisticated platforms capable of generating photorealistic digital humans that can speak, gesture, interact with objects, and even represent us across the digital landscape—all in seconds rather than weeks.

The numbers tell a staggering story. The digital avatar market, valued at $20.13 billion in 2025, is projected to grow at an explosive 50.4% CAGR to reach $792.6 billion by 2034. This isn’t just about fun profile pictures anymore. Avatars have become persistent “owned channels” for brands, essential tools for creators, and increasingly sophisticated partners in enterprise workflows.
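For readers who want to sanity-check that projection, here is a minimal sketch of the compound-growth arithmetic. It assumes the 50.4% rate compounds annually over the nine years from 2025 to 2034; the function names are illustrative and not taken from any market report.

```python
def project_value(start_value: float, cagr: float, years: int) -> float:
    """Compound start_value at a constant annual growth rate for `years` years."""
    return start_value * (1.0 + cagr) ** years


def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Recover the constant annual growth rate that links two values."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Figures quoted in the article, in billions of US dollars.
START_2025 = 20.13
END_2034 = 792.6
YEARS = 2034 - 2025  # assumption: nine annual compounding periods

projected_2034 = project_value(START_2025, 0.504, YEARS)
rate = implied_cagr(START_2025, END_2034, YEARS)

print(f"Projected 2034 market size: ${projected_2034:.1f}B")
print(f"Implied CAGR from the two endpoints: {rate:.1%}")
```

Under that assumption the two quoted endpoints and the quoted growth rate are consistent with each other: a market growing at 50.4% a year multiplies roughly 39-fold in nine years.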

What Makes an Avatar Maker in 2026?

Today’s avatar makers combine three core technologies that work in harmony:

Generative models built on diffusion transformers, the architecture family behind video generators such as Sora, create realistic faces, bodies, and animations by learning from vast video datasets of human movement and expression.

Text-to-speech and voice cloning have moved far beyond robotic narration. Modern systems use neural voice synthesis that captures prosody, emotion, and accent, with some platforms offering over 100 voice options across 70+ languages.

Lip synchronization and facial motion models match mouth movements to audio with frame-level precision. Advanced systems like Creatify’s Aurora model generate full-body expressiveness, including hand gestures, natural eye contact, head tilts, and breathing, rather than just moving lips.
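To make “frame-level precision” concrete, here is a minimal sketch of the timing bookkeeping involved: turning timed mouth-shape cues into one label per video frame. It is not how Aurora or any other commercial model works internally (those are learned models); the cue format, the mouth-shape labels, and the function name are all assumptions made for illustration.

```python
def mouth_shapes_per_frame(cues_ms, fps, duration_ms):
    """Assign one mouth-shape label to every video frame.

    cues_ms: list of (start_ms, end_ms, label) tuples (hypothetical format).
    Integer milliseconds are used to avoid floating-point rounding at
    frame boundaries.
    """
    n_frames = -(-duration_ms * fps // 1000)  # ceiling division
    frames = ["rest"] * n_frames              # default when nothing is spoken
    for start_ms, end_ms, label in cues_ms:
        first = start_ms * fps // 1000                 # frame containing the cue start
        last = min(n_frames, -(-end_ms * fps // 1000)) # first frame after the cue ends
        for i in range(first, last):
            frames[i] = label
    return frames


# Hypothetical cues for a 400 ms clip rendered at 25 fps (40 ms per frame).
cues = [(0, 120, "closed"), (120, 320, "open")]
frames = mouth_shapes_per_frame(cues, fps=25, duration_ms=400)
print(frames)
```

With these inputs the first three frames are labeled “closed,” the next five “open,” and the final two fall back to “rest.” A production system would predict continuous facial motion rather than discrete labels, but the same audio-to-frame indexing sits underneath.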

The Avatar Ecosystem: Finding Your Perfect Match

The avatar landscape has diversified dramatically. Here’s how different platforms serve different needs.

Performance Advertising Powerhouses

For marketers who need to test dozens of ad variations weekly, specialized platforms have emerged. Creatify automatically converts product URLs into multiple UGC-style video ads, analyzing product pages to generate scripts and select relevant visuals. Its batch mode can produce 10-20 variations from a single URL, testing different hooks, avatars, and calls-to-action simultaneously. With support for 75+ languages and AI-driven insights suggesting which creative elements perform best, it has become a staple for e-commerce brands and agencies.
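The idea behind batch testing is simple combinatorics: a handful of hooks, avatars, and calls-to-action multiply into many distinct ads. The sketch below illustrates that idea only. It does not use Creatify’s API, and the `AdVariant` class, the `build_variants` function, and the sample copy are all hypothetical.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class AdVariant:
    """One combination of creative elements to test (hypothetical structure)."""
    hook: str
    avatar: str
    cta: str


def build_variants(hooks, avatars, ctas, limit=None):
    """Return every hook x avatar x CTA combination, optionally capped at `limit`."""
    variants = [AdVariant(h, a, c) for h, a, c in product(hooks, avatars, ctas)]
    return variants if limit is None else variants[:limit]


# Placeholder creative elements for a single product page.
hooks = ["Tired of tangled cables?", "This charger changed my desk", "3 reasons to switch"]
avatars = ["avatar_a", "avatar_b"]
ctas = ["Shop now", "Try it free", "See the demo"]

variants = build_variants(hooks, avatars, ctas)
print(len(variants))  # 3 hooks * 2 avatars * 3 CTAs = 18
```

Three hooks, two avatars, and three calls-to-action already yield 18 variants, which is in line with the 10-20 range described above.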

Arcads takes a different approach with over 1,000 controllable AI actors and emotion control via text prompts. Want an avatar to appear “excited,” “skeptical,” or “calm”? You can specify the performance style. Avatars can hold products, display apps on screen, and interact with props, creating product demonstrations that feel more authentic than simple talking heads.

Higgsfield targets SaaS companies needing consistent “brand ambassador” avatars across help pages, landing pages, and paid ads. Its product-to-video workflow excels at “how it works” explanations, with plans ranging from $9 to $149 monthly.

Enterprise Training and Communication

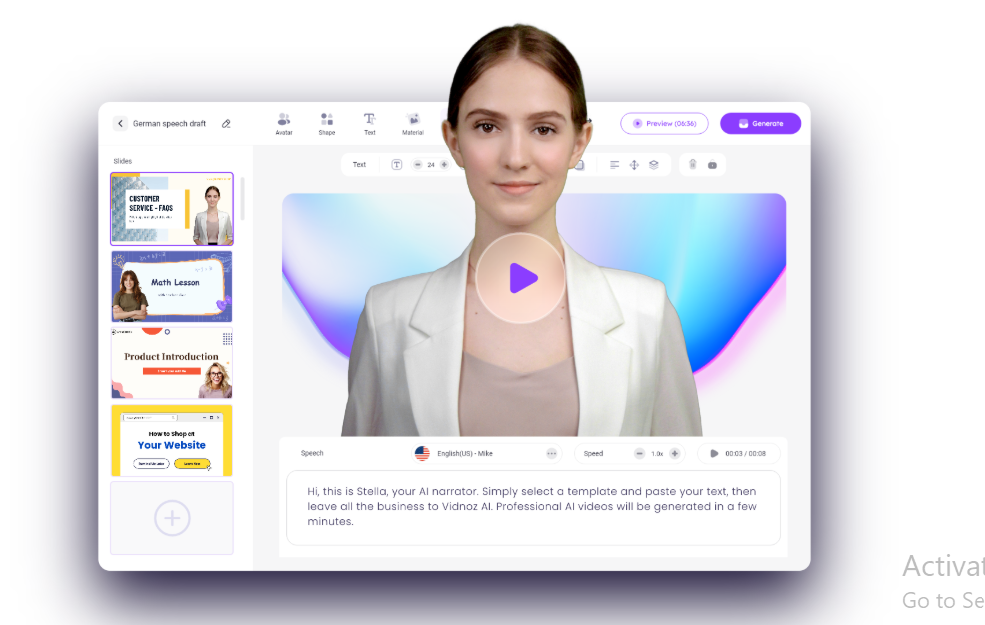

When reliability and compliance matter more than cutting-edge realism, enterprise platforms dominate. Synthesia pioneered AI avatar video for training and corporate communications, offering extensive stock avatar libraries, custom avatar creation, and support for 140+ languages with script-to-video workflows. SOC 2 and GDPR compliance make enterprise adoption straightforward.

Colossyan is tailored specifically to e-learning and compliance training, with 150 to 200+ avatars, 600+ voices, and built-in interactivity including quizzes and branching scenarios. SCORM export and LMS-friendly workflows integrate with existing training infrastructure.

HeyGen balances versatility with accessibility, handling explainer videos, marketing content, and multilingual translation across 175+ languages with lip synchronization. Its new Video Agent 2.0 shows users a complete creative blueprint before rendering, allowing refinement through natural conversation.

The Speed Revolution: 15-Second Avatars

Perhaps the most dramatic simplification comes from HeyGen, which rebuilt its avatar creation flow from the ground up. The old process took minutes, asked too many questions, and most people didn’t finish. Now it takes 15 seconds.

Users simply turn on their webcam, follow a short guided prompt, and record for 15 seconds. That single recording captures appearance, voice, motion, and consent: everything needed to start making videos immediately. No lighting setup, no script to read, no multiple takes. And this isn’t a stripped-down version. It’s a real starting point that grows in quality as users add more footage over time.

Breaking New Ground: Avatars That Interact

The most exciting research breakthrough comes from InteractAvatar, a novel dual-stream framework that enables talking avatars to perform grounded human-object interaction.

Previous methods were restricted to simple gestures, but InteractAvatar can perceive the environment from a static reference image and generate complex, text-guided interactions with objects while maintaining high-fidelity lip synchronization. The system addresses the “Control-Quality Dilemma,” the historical challenge of grounding actions in scenes without losing video fidelity when complex motions are required.

Through its dual-stream architecture, InteractAvatar can understand prompts like “Pick up the apple on the table” and generate coherent video of an avatar performing that action. The framework supports multimodal control with any combination of text, audio, and motion inputs, and can generate avatars that talk, act, and interact with objects.

With inference code released in January 2026 and pretrained checkpoints initialized from Wan2.2-5B, this open-source project points toward a future where avatars aren’t just talking heads but embodied digital beings capable of meaningful interaction with their environment.

Fun and Creative Applications

Not every avatar needs to be business-focused. MyEdit has emerged as a leading option for creators who want to participate in viral trends. The platform enables everything from “Italian Brainrot” characters with exaggerated hand gestures to AI-generated Chibi figures with oversized heads and adorable expressions.

Users can animate static photos into dance videos, create “squish” effects that distort images for hilarious reactions, transform pets into humanized versions, or turn themselves into action figures. These playful applications demonstrate how avatar technology has become accessible to everyone, not just enterprise users.

Platform Integration: Avatars Go Mainstream

The most significant validation of avatar technology comes from platform integration. YouTube announced in its 2026 product roadmap that creators will soon be able to produce Shorts using their own AI avatars.

CEO Neal Mohan emphasized that “AI technology should be a tool that expands creators’ creativity,” positioning AI as a creative partner rather than a replacement. Creators will retain full control over their own AI avatars, AI-generated content must be clearly labeled, and protective measures will be implemented to prevent deepfake misuse.

Remarkably, over one million channels are already using AI-powered creation tools every day, demonstrating just how central AI has become to the YouTube ecosystem.

What to Look for in an Avatar Maker

With so many options available, choosing the right platform depends on your specific needs.

For performance marketing, prioritize platforms with batch testing, URL-to-video workflows, and AI-driven insights. Creatify excels here with its Aurora model and multi-language support.

For enterprise training, focus on compliance, template libraries, and LMS integration. Synthesia, with SOC 2 and GDPR compliance, and Colossyan, with SCORM export, lead here.

For quick social content, look for tools with free tiers like Fotor (paid plan at $10.99/month) or Avatarify for animated effects. D-ID offers photorealistic talking avatars starting at $4.70/month.

For cutting-edge research and development, explore open-source projects like InteractAvatar that push the boundaries of what’s possible.

The Future of Avatar Technology

Several trends will shape avatar development through the remainder of 2026 and beyond.

Real-time matters more than photoreal. Sub-200 millisecond turn-taking, prosody control, and gesture-speech alignment often drive satisfaction more than maximum visual fidelity. Stylized avatars can actually outperform in trust and load time.
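A sub-200 millisecond target is easiest to reason about as a budget split across pipeline stages. The sketch below shows that bookkeeping; the stage names and every per-stage number are made-up placeholders, not measurements from any platform.

```python
TURN_BUDGET_MS = 200  # the sub-200 ms turn-taking target discussed above


def check_budget(stage_ms, budget_ms=TURN_BUDGET_MS):
    """Sum per-stage latencies and report whether the turn fits the budget.

    stage_ms: mapping of stage name -> latency in milliseconds.
    Returns (total_ms, within_budget, slowest_stage).
    """
    total = sum(stage_ms.values())
    slowest = max(stage_ms, key=stage_ms.get)
    return total, total <= budget_ms, slowest


# Hypothetical latencies for one conversational turn.
stages = {
    "speech_recognition": 40,
    "llm_first_token": 90,
    "tts_first_audio": 50,
    "face_animation": 30,
}

total_ms, within_budget, slowest = check_budget(stages)
print(f"total={total_ms} ms, within budget={within_budget}, slowest={slowest}")
```

In this made-up example the turn takes 210 ms, just over budget, and the language-model stage is the first place to look for savings. That is the kind of trade-off that can favor a lighter, stylized avatar over a heavier photoreal one.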

Multimodal stacks win deals. Best-in-class solutions combine LLM reasoning, TTS/voice cloning, ASR, lip/face/hand synthesis, and scene control, all exposed via APIs and designed for contact-center, LMS, and broadcast workflows.

Compliance becomes a feature. Consent capture, watermarking, provenance metadata, and audience disclosure are becoming table stakes, especially for finance, healthcare, and public-sector deployments.

Edge inference lowers cost and delay. On-device or near-edge voice and animation pipelines reduce cloud egress and latency, enabling kiosks, retail mirrors, in-vehicle assistants, and offline classrooms.

Localization drives ROI. Native-accent TTS, cultural gestures, and script adaptation outperform literal translation. Multilingual avatars expand reach without multiplying headcount.

Making Your Choice

The avatar maker landscape in 2026 offers unprecedented power and flexibility. Whether you need a 15-second talking head for social media, a full-body digital twin for global marketing campaigns, or a research-grade system enabling object interaction, the tools exist to make it happen.

Start by identifying your primary use case, budget, and technical requirements. Experiment with free options like Fotor or D-ID’s trial versions. Then scale up as you discover what works for your specific needs.

The era of static digital representation is over. Today’s avatars can speak, gesture, interact, and evolve alongside us. Your digital twin awaits—and creating it has never been faster, more expressive, or more accessible.